The autonomous quantum lab

Why the future of quantum computing will run itself.

We just announced a milestone with EeroQ and NVIDIA. An AI agent ran a quantum computing experiment on real hardware from a single plain-English prompt. Multiple parameter sweeps, real-time plots, logged results. No researcher at the controls.

It is a proof of concept. It is also a signal of where the field is heading.

What is an autonomous quantum lab?

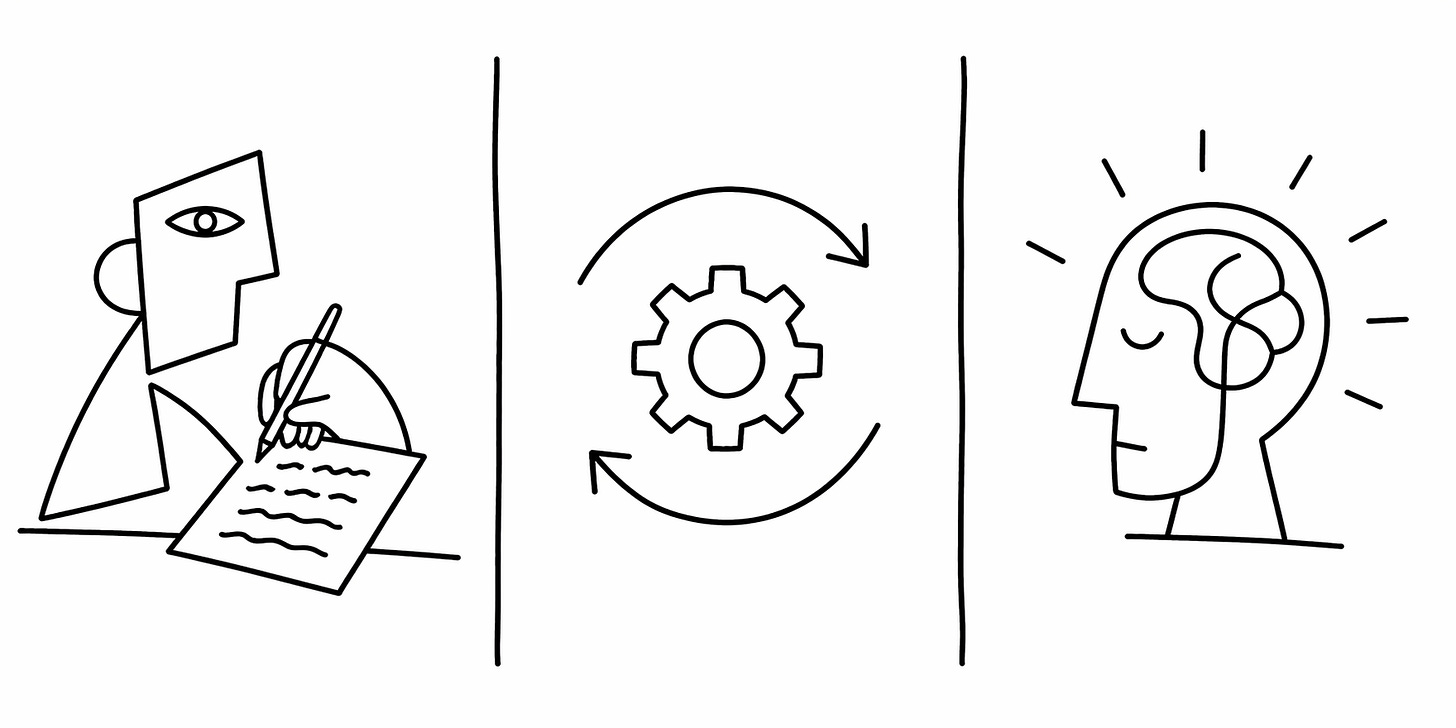

In a traditional lab, every experiment requires a human in the loop. Writing control sequences, configuring instruments, running measurements, interpreting output, adjusting, repeating. The cycle time is set by human attention and human sleep schedules.

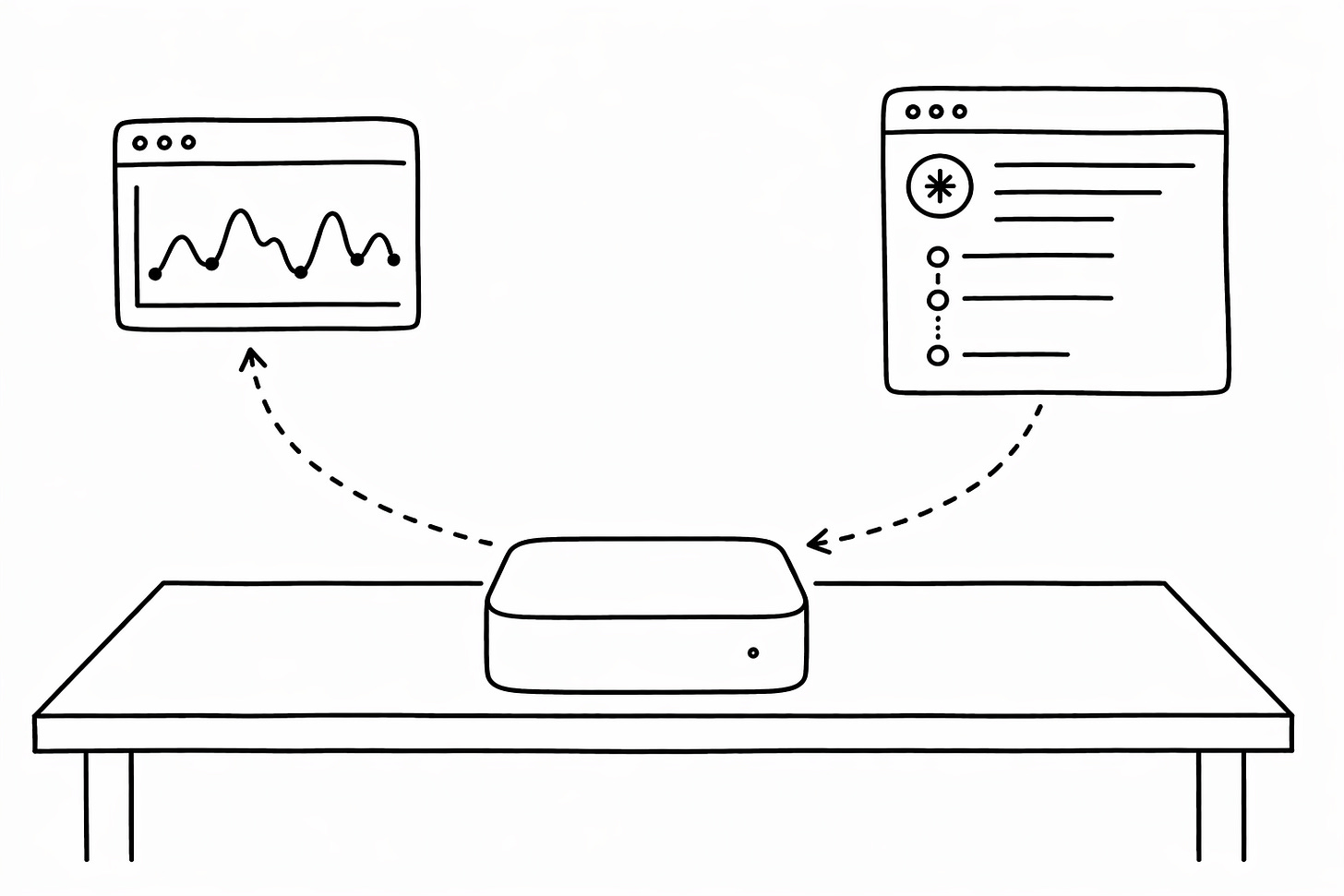

An autonomous lab replaces that loop with an AI agent. The human defines the objective. The agent connects to hardware, runs the experiment, interprets results, and decides what to do next.

In our demonstration, the agent performed an electron trapping and detection protocol on an EeroQ electron-on-helium chip. Specifically, the agent controlled the voltages applied to electrodes on the chip (beneath the helium) to move electrons between regions while measuring the tiny electrical signals produced by the electron motion. Along the way, the agent swept across parameters and produced real-time data plots, all without human intervention.

Quantum computing labs sit on a spectrum of automation. Most are closer to the manual end than anyone would like to admit.

Scripted. A researcher writes code to interface with instruments in the lab. A human designs the experiment, runs the code, and interprets results by hand. Most labs sit here.

Automated. Software handles calibration and data analysis, following an expert-designed workflow.

Autonomous. The agent designs experiments, runs them, interprets data, debugs failures, and iterates. Our demonstration sits at this level.

Algorithms first, agents second

There is a strong argument that calibration should start with defined routines, not agents. Signal processing tools analyse the data. Active learning and efficient search algorithms decide which parameters to try next. The process is predictable, fast, reliable, and easy to debug when something fails.
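To make "efficient search decides which parameters to try next" concrete, here is a minimal sketch. It uses golden-section search, which is our choice for illustration and not necessarily what any given control stack runs. The `simulated_signal` function and its peak at 0.37 V are made up, and the method assumes a single-peaked, noise-free response.

```python
import math


def golden_section_maximize(measure, low, high, tolerance=1e-3):
    """Find the input in [low, high] that maximizes a single-peaked measure().

    Each loop iteration reuses one earlier measurement, so only one new
    measurement is taken per step.
    """
    inv_phi = (math.sqrt(5) - 1) / 2  # ~0.618, the golden-ratio shrink factor
    a, b = low, high
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    fc, fd = measure(c), measure(d)
    evaluations = 2
    while (b - a) > tolerance:
        if fc > fd:
            # Peak lies in [a, d]: drop the right part, reuse c as the new d.
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = measure(c)
        else:
            # Peak lies in [c, b]: drop the left part, reuse d as the new c.
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = measure(d)
        evaluations += 1
    return (a + b) / 2, evaluations


def simulated_signal(voltage):
    """Hypothetical stand-in for a hardware measurement (peak at 0.37 V)."""
    return math.exp(-((voltage - 0.37) / 0.1) ** 2)


best_voltage, evaluations = golden_section_maximize(simulated_signal, 0.0, 1.0)
print(f"best voltage ~ {best_voltage:.3f} V after {evaluations} measurements")
```

A uniform sweep at the same 1 mV resolution would need about a thousand measurements. Real signals are noisy and often multi-peaked, which is where more robust methods such as active learning come in.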

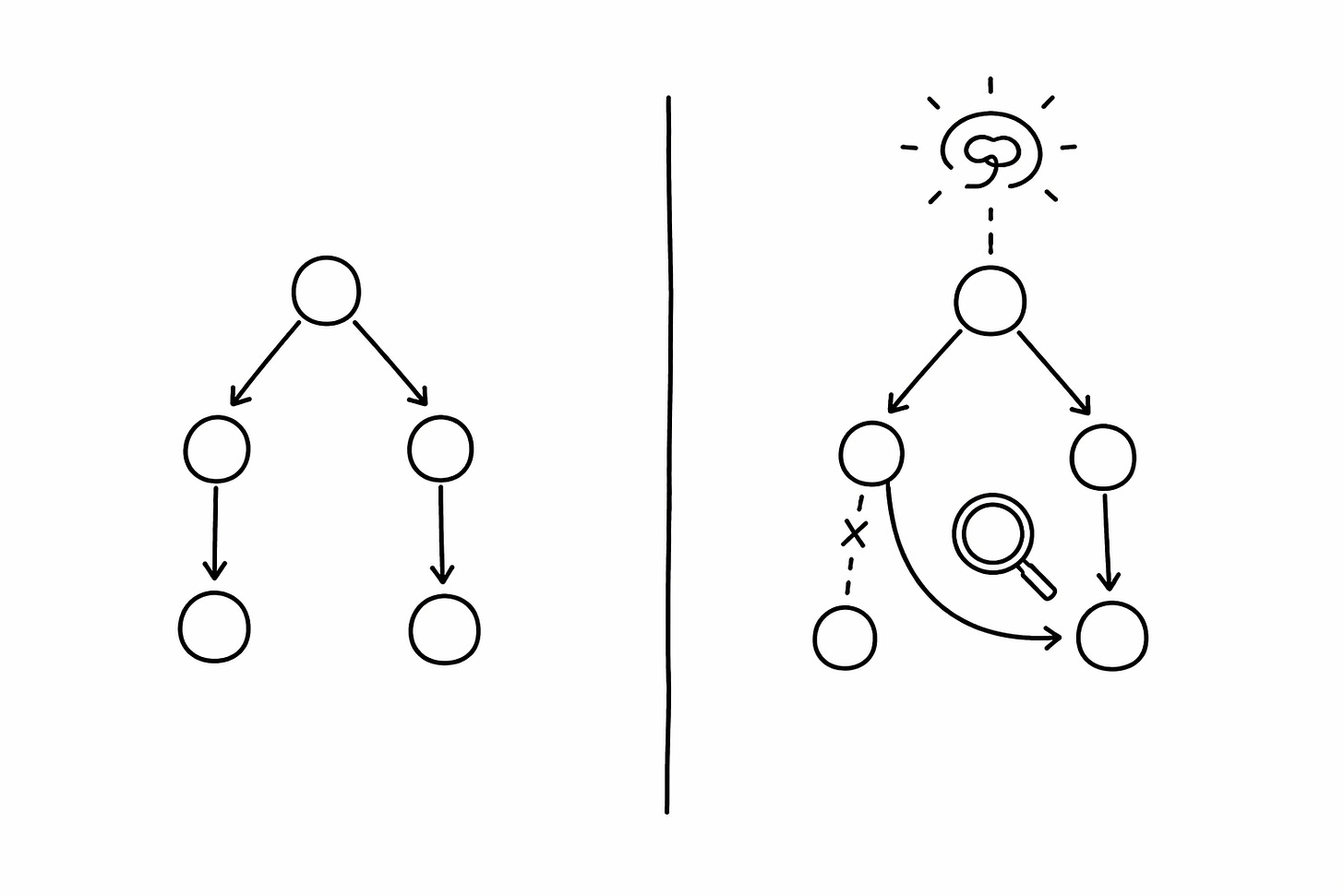

This is how quantum control software works today. It follows a defined calibration workflow: each node runs a measurement, evaluates the result, and moves to the next step. When a step fails, you know where and why. Better analysis tools at each node mean better calibration outcomes across the board.
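The node-by-node workflow can be sketched in a few lines. This is an illustration under our own assumptions, not the code behind any particular product: the `CalibrationNode` class, the step names, and the hard-coded measurement values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class CalibrationNode:
    """One workflow step: measure, evaluate, then name the next step."""
    name: str
    measure: Callable[[], float]      # stand-in for a real hardware measurement
    passes: Callable[[float], bool]   # evaluation of the measured value
    next_node: Optional[str] = None   # step to run on success; None ends the run


def run_workflow(nodes: Dict[str, CalibrationNode], start: str) -> List[dict]:
    """Walk the graph from `start` and stop at the first failing node."""
    log = []
    current = start
    while current is not None:
        node = nodes[current]
        value = node.measure()
        ok = node.passes(value)
        log.append({"node": node.name, "value": value, "ok": ok})
        if not ok:
            break  # the log now shows exactly where the workflow failed
        current = node.next_node
    return log


# Hypothetical three-step workflow with made-up values, for illustration only.
nodes = {
    "find_resonance": CalibrationNode(
        "find_resonance", lambda: 6.02, lambda f: 5.0 < f < 7.0, "tune_bias"),
    "tune_bias": CalibrationNode(
        "tune_bias", lambda: 0.2, lambda contrast: contrast > 0.5, "verify_readout"),
    "verify_readout": CalibrationNode(
        "verify_readout", lambda: 0.98, lambda fidelity: fidelity > 0.9),
}

log = run_workflow(nodes, "find_resonance")
failed = [entry["node"] for entry in log if not entry["ok"]]
print(failed)
```

Here the run stops at `tune_bias`, and the log records the value that failed the check. That is the "you know where and why" property: the failure is tied to one node and one measurement.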

But defined routines have a ceiling. They follow the graph you give them. They cannot discover a better graph. They cannot tell you whether a failure came from a bad calibration routine or a broken device. They do not ask whether the wiring is wrong or the trap needs cleaning.

This is where agents add value. An agent can sit above the calibration routines, observe patterns across runs, and propose changes to the graph itself. When a routine fails, the agent does not just retry. It diagnoses whether the problem is in the routine or in the hardware. Algorithms handle the known path. Agents handle the unknown.

Why it matters: autonomy at scale

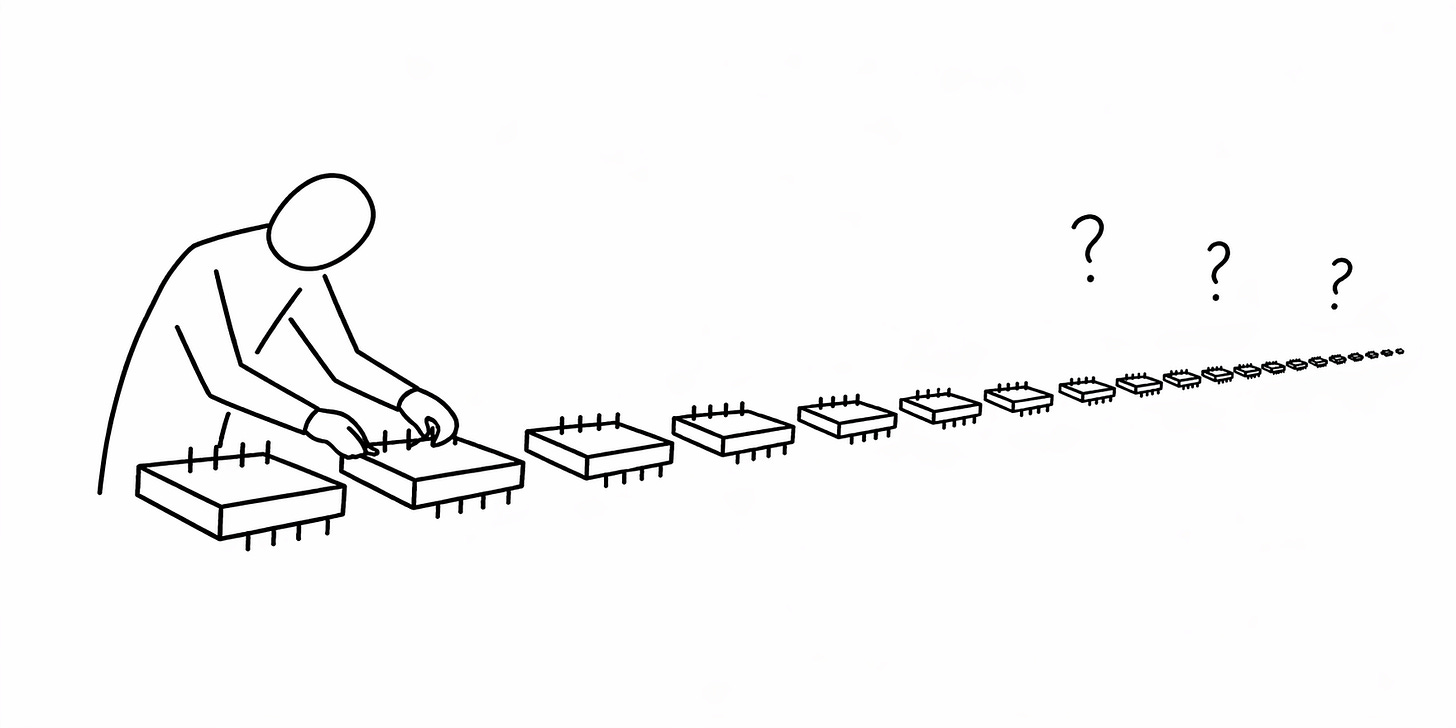

The calibration burden scales with device count. A researcher can calibrate one small device in an afternoon. Ten devices take multiple weeks. At 1,000 qubits, manual recalibration becomes impossible. The problem is not only difficulty but time. The hours do not exist.

At that scale, agents are essential. They take over when expert-led automation fails. They identify which steps can run in parallel and which ordering yields the best fidelity. When something fails, they cross-reference the failure against historical data, device characteristics, and lab conditions. Every action, measurement, and decision gets logged. The record is permanent, searchable, and available to the whole team. When a researcher leaves, the knowledge stays.
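Working out which steps can run in parallel is a dependency-graph problem. A minimal sketch, using Python's standard `graphlib` module (Python 3.9+): the step names and dependencies below are hypothetical, and the sketch covers only parallelism, not the harder question of which ordering gives the best fidelity.

```python
from graphlib import TopologicalSorter


def parallel_batches(dependencies):
    """Group steps into batches; every step in a batch can run at the same time.

    `dependencies` maps each step to the set of steps that must finish first.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies loop
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all steps whose prerequisites are done
        batches.append(ready)
        sorter.done(*ready)
    return batches


# Hypothetical two-qubit calibration plan, for illustration only.
dependencies = {
    "readout_q0": set(),
    "readout_q1": set(),
    "single_qubit_gate_q0": {"readout_q0"},
    "single_qubit_gate_q1": {"readout_q1"},
    "two_qubit_gate_q0_q1": {"single_qubit_gate_q0", "single_qubit_gate_q1"},
}

batches = parallel_batches(dependencies)
for index, batch in enumerate(batches):
    print(index, batch)
```

Five steps collapse into three batches. With independent qubits the first batches grow wider rather than longer, which is what keeps wall-clock time from growing in step with device count.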

More than experiments

A quantum lab is also an operations problem. Someone tracks which attenuators and coax lines are wired to which fridge ports. Someone orders replacements. Someone books fridge time, fills the nitrogen trap, updates the wiki, and watches for ground loops. In most labs, that someone is also supposed to be doing science.

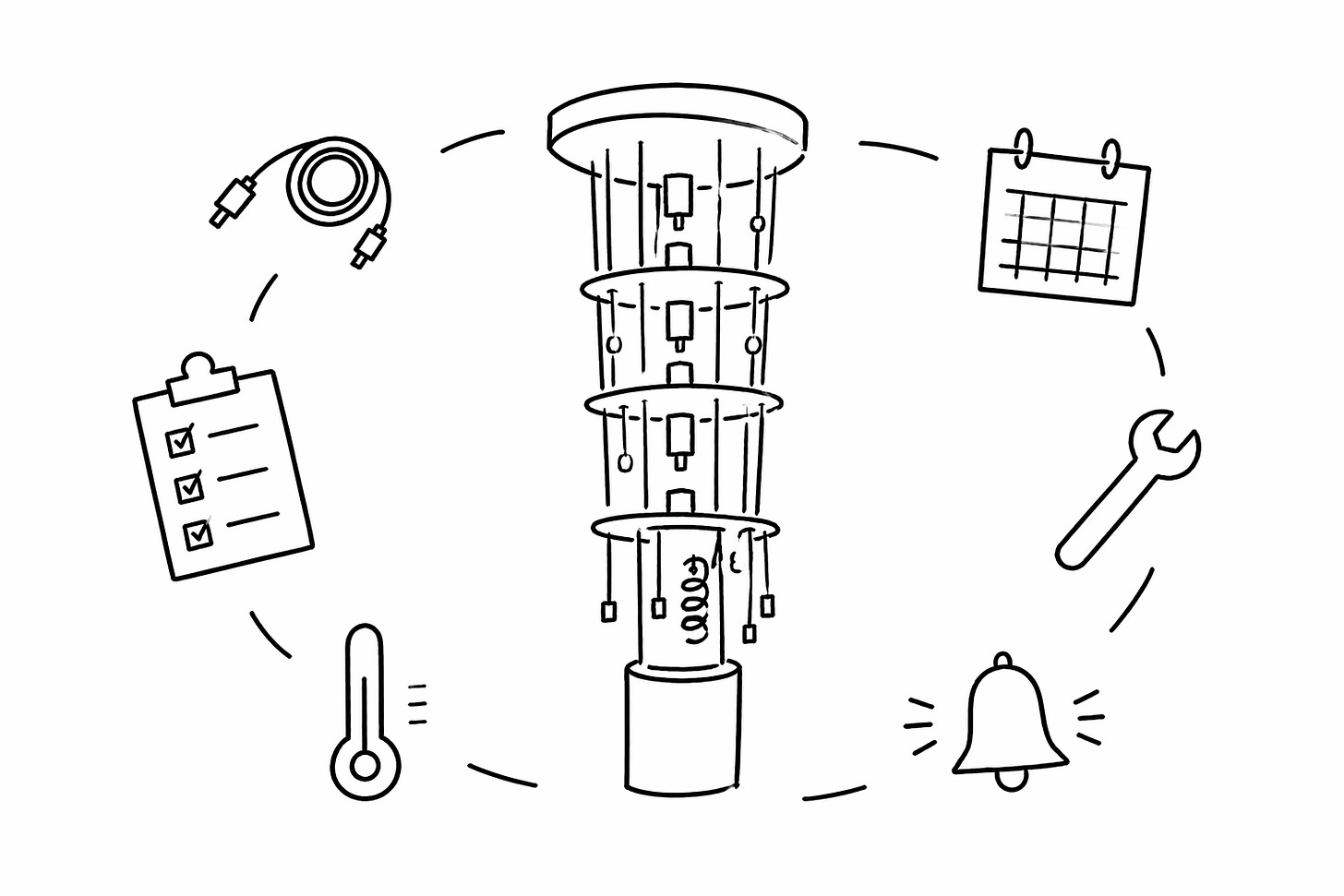

An autonomous lab manages all of it. Configurable wiring diagrams for each fridge. Inventory tracking with automatic purchase orders for lines and attenuators. Nitrogen trap fill notifications. Fridge bookings and experiment scheduling that update themselves.

The power is in connecting operations to knowledge. The lab’s entire knowledge base, notebooks, wiki, and operating procedures become context for every decision. When a fridge measurement looks off, or a calibration routine fails, the system cross-references current lab state, past logs, and operating procedures. It might find the failure is due to elevated temperature in the fridge, then debug why. It recommends a throughput test, a trap cleaning, or a service visit. It does not just record what happened. It knows what to do about it.

Qubit metrics like T1, T2, and gate fidelity get logged alongside environment data. When performance drops, you can trace it to a temperature excursion or a new ground loop instead of guessing. Every data point from every device feeds back into the lab’s operating memory.
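Once metrics and environment data share a log, the correlation is a short calculation. A minimal sketch, assuming a hypothetical log format: the field names `t1_us` and `fridge_mk` and every number below are made up for illustration, not measured data.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Synthetic log entries: T1 in microseconds, fridge temperature in millikelvin.
lab_log = [
    {"t1_us": 50.0, "fridge_mk": 10.0},
    {"t1_us": 49.0, "fridge_mk": 11.0},
    {"t1_us": 47.0, "fridge_mk": 12.0},
    {"t1_us": 40.0, "fridge_mk": 15.0},
    {"t1_us": 30.0, "fridge_mk": 20.0},
]

r = pearson(
    [entry["fridge_mk"] for entry in lab_log],
    [entry["t1_us"] for entry in lab_log],
)
print(f"correlation between fridge temperature and T1: {r:.3f}")
```

A coefficient near -1 on this synthetic data points to temperature as the first thing to check. Correlation alone does not establish cause, which is why the rest of the lab state stays in the record.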

The labs that scale will be the ones where this overhead runs on software, so researchers can do science. The greatest breakthroughs in quantum computing will be led by machine intelligence. We built the first proof of concept. The next step is making it standard.

Link to the QCalEval Benchmark Paper.

Link to the release from NVIDIA.

Link to the release with EeroQ.

Conductor Quantum is building quantum superintelligence. We are an American company headquartered in San Francisco, California. We are assembling a team of hardcore engineers whose sole focus is to develop quantum superintelligence. If you would like to solve one of the hardest technological challenges of our time, this is your chance. Join us.